Discussing the Community Builder Epoch 1 RPGF results

Before anything else, I want to thank all Community Builders (CBs) for being a part of the first Community Builders RPGF round. Those who stayed on made an impressive collective effort. I'm personally very pleased with the results, with the final group of those who remain in the program, and with what the results show us, which is what we'll discuss here.

The final group in the program ended up being some 172 candidates offering varying levels of contribution across several skill sets. This made qualitative evaluation difficult, but the voting structure that I described for the program in this article did a fair job of drawing on social consensus. The results speak for themselves, barring some fuzzy edges in the logic of the program's execution - some of which I hope to address here - and the results from this voting round (epoch) will help us understand what we hope to see in the community we foster around Namada.

Some Context

There's a fun exercise that you can play with a jar of sweets - you ask a room full of people (usually a classroom) to estimate the number of sweets inside the jar. Usually, this is a competition to see which single person can guess closest to the correct answer for an individual payout (sometimes the sweets themselves!), and it is intended as a demonstration of estimation.

However, the secondary effects of having a whole room make a guess are a little more fascinating: if the guesses submitted by the students are summed and divided by the number of guesses, the resulting average is, in a perfect setting, often shockingly close to the actual number of sweets in the jar. This phenomenon was popularized by James Surowiecki's book 'The Wisdom of Crowds', which shows that collective computation can often lead you to results that are harder to discern on an individual level.
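The averaging step described above can be sketched in a few lines of Python. The guesses and the true count below are made-up illustrative numbers, not data from any real classroom or from the program, and `crowd_estimate` is a hypothetical helper name.

```python
from statistics import mean

# Hypothetical numbers for illustration only.
TRUE_COUNT = 500
guesses = [280, 350, 420, 470, 510, 540, 590, 650, 720]


def crowd_estimate(values):
    """Sum the guesses and divide by the number of guesses."""
    return mean(values)


estimate = crowd_estimate(guesses)
crowd_error = abs(estimate - TRUE_COUNT)

# Count how many individual guesses are further from the truth than the average is.
individual_errors = [abs(g - TRUE_COUNT) for g in guesses]
beaten = sum(1 for err in individual_errors if err > crowd_error)

print(f"crowd estimate: {estimate:.1f}, error: {crowd_error:.1f}")
print(f"individuals further off than the crowd: {beaten} of {len(guesses)}")
```

With these invented guesses the average lands closer to the true count than any single guess does. That only holds when individual errors point in different directions and roughly cancel out, which is the "perfect setting" caveat above.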

This was the same logic behind proposing that we allocate 40% of the voting power to the community of CBs who participated in the program. While centrally evaluating all submissions could have led to the highest level of quality control overall, it would have been extremely time-consuming to do so, and involving the participants themselves means collecting an aggregate (and hopefully more informative and less biased) opinion.

As a result, the contributions to the program were assessed not only by Early Stewards, but by 172 community participants whose opinions were represented and whose votes were counted as valuable. I expect that the results reflect a level of accuracy that I didn't think the Early Stewards could achieve on their own.

The Results

As a reminder, the first round of Community Builders social consensus voting used the Web3 application Coordinape to weight the community's votes on contributors to the program. The vote weight was split with 60% in the hands of the Early Stewards (ES - a hand-selected collective of core contributors to Namada) and 40% allocated to the CBs themselves, with the final results informing how the program's 10M NAM will be distributed among participant CBs. Program participants had 1 week to allocate their votes to one another, with the ES ineligible to receive tokens due to their relationship to the protocol's development.
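To make the 60/40 split concrete, here is a minimal sketch of one way the two groups' votes could be blended into a NAM allocation. The article does not specify the exact formula or how Coordinape data was processed, so the proportional blending, the function names (`normalize`, `allocate`), and the sample recipients and GIVE amounts are all assumptions for illustration.

```python
ES_WEIGHT = 0.60       # share of vote weight held by Early Stewards
CB_WEIGHT = 0.40       # share of vote weight held by Community Builders
POOL_NAM = 10_000_000  # program pool to be distributed


def normalize(give):
    """Turn raw GIVE received per recipient into fractions summing to 1."""
    total = sum(give.values())
    if total == 0:
        return {name: 0.0 for name in give}
    return {name: amount / total for name, amount in give.items()}


def allocate(es_give, cb_give, pool=POOL_NAM):
    """Blend the two groups' normalized GIVE 60/40 and scale to the pool.

    Assumption: the split is applied to each group's normalized totals.
    """
    es_share = normalize(es_give)
    cb_share = normalize(cb_give)
    recipients = set(es_share) | set(cb_share)
    return {
        name: pool * (ES_WEIGHT * es_share.get(name, 0.0)
                      + CB_WEIGHT * cb_share.get(name, 0.0))
        for name in recipients
    }


# Hypothetical GIVE received by three CBs from each voting group.
es_give = {"alice": 60, "bob": 40}
cb_give = {"alice": 20, "bob": 30, "carol": 50}

result = allocate(es_give, cb_give)
for name in sorted(result):
    print(f"{name}: {result[name]:,.0f} NAM")
```

Under this reading, a CB who receives nothing from the ES (like the hypothetical `carol`) can still earn up to 40% of the pool from peers alone, which is the sense in which the community's opinion carries real weight.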

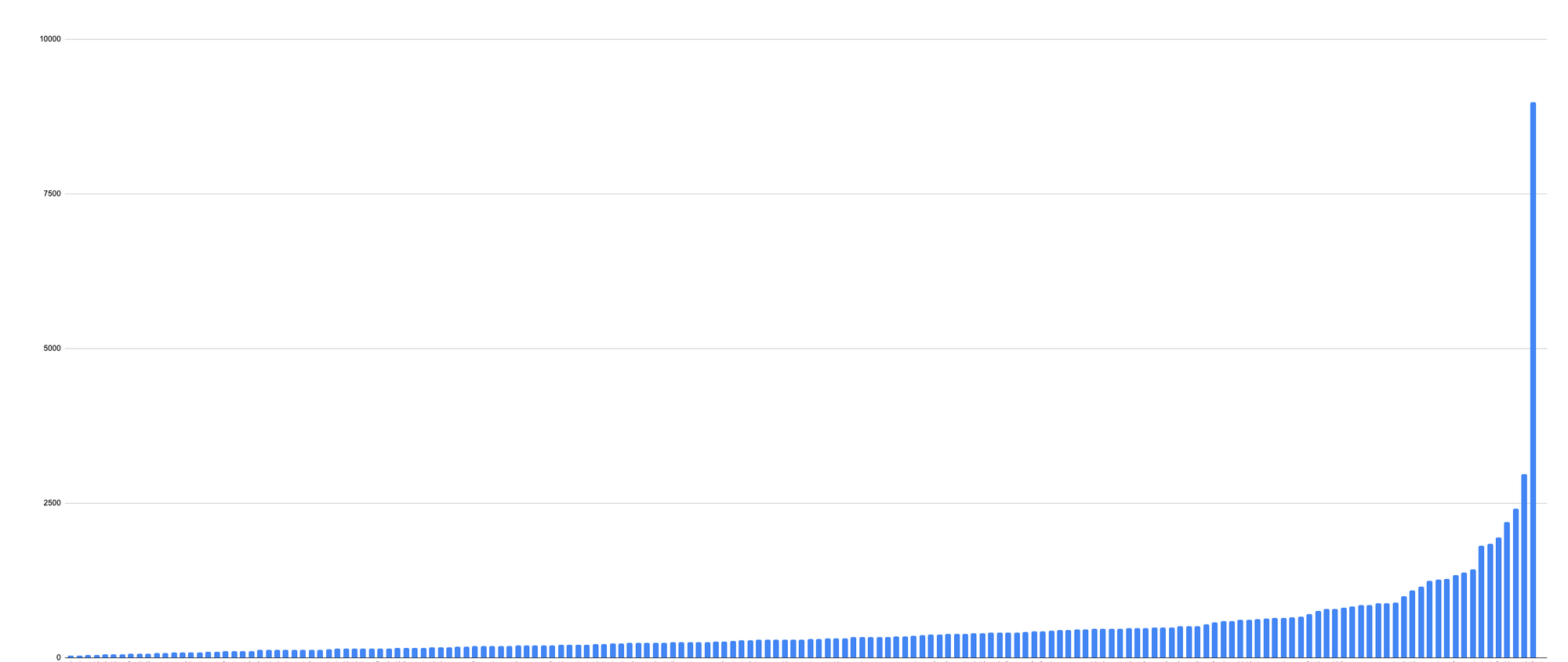

With the votes finally in, our first pass of the results shows that the community favored members who have been a part of the Namada community for a long time and who have built their reputation within it over that time by being strong collaborators. This is evidenced by the graph above, with 40% of total GIVE vote weight allocated to the top 17 (or 10%) of Community Builders.

Preliminary Reactions and Discussion

At first glance, the distribution of GIVE reflects certain community members having been very active in our community and being recognized for this by others, and it's important to center this before adding other levels of interpretation. While it's important to control for the ES votes, which tipped the scales of this distribution, it is still an analytically useful signal of who merited the most attention, and we envision that the effect of ES votes will shrink as the total GIVE percentage allocated to ES decreases in proportion to the community's in future rounds.

Second, it's not quite a question of accumulated points - the allocation of GIVE reflects community sentiment around what all 176 voting members found to be valuable to the future of Namada. This is fundamentally a very good thing that we should encourage far more of. Nothing says that Namada will continue using Coordinape as a method for allocating NAM for Community Builder programs (that will be the subject of discussion with those who stay on), but for now, these results are extremely promising because they show us who the community sees as being the most impactful, and they help us understand who we should be asking to stay within the Community Builders program.

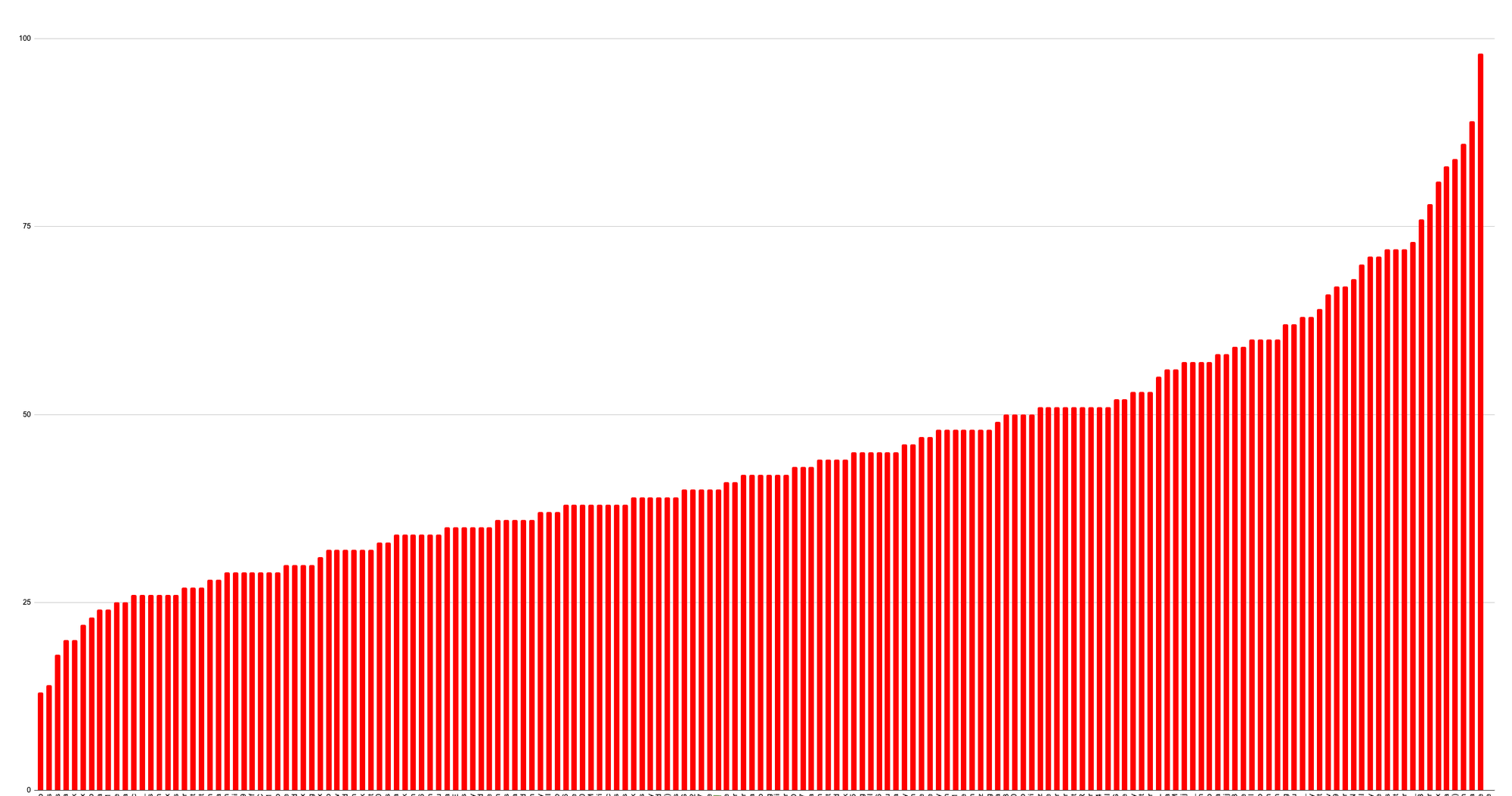

This is evidenced as well by the contrast with the distribution of how many givers allocated to select CBs, shown in the chart above.

Without naming names, we can see that the distribution of voting actions is overall more even than that of individual token amounts - it reflects a well-connected network. There are some exciting implications of this that I'll discuss in a future article, but suffice it to say that the distributions of tokens AND voting actions both show that the CB program served, at least initially, to connect many of those who participated in it.

Voting Cartels

Ahead of voting, I received messages from a few CBs worried that the voting structure used in the program would encourage the formation of cartels (cartelization, as I'll refer to it from here on out) of individuals who would vote only for one another, likely encouraged by an expectation of accumulating rewards. It looks as though people did actually do this, but if anything, I'd guess the effects were marginal due to the fixed number of votes per participant.

Despite these cartels, it looks as though the results played out as expected - we still have the signals necessary to determine who we want to stay on in the program by using the voting metric and weighing it against qualitative assessments such as contributions and collaborative skill-will. The equal distribution of votes likely pushed cartel votes into the lowest tiers of GIVE allocation (e.g. the bottom 40% (69) of CBs only received 10% of total GIVE (10231), at an average of 148 GIVE each - a lot of effort for little return, if collusion was in their midst). The net effect of any defections I expect to see in the final analysis will be interesting because of this - but so far the results have effectively met the expectations I had at the start.
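The figures quoted above can be cross-checked with a little arithmetic. This sketch uses only numbers stated in this article (172 CBs, 10231 GIVE for the bottom 40%, and the 40% share held by the top 17). It treats the rounded percentages as exact, so the implied total and the per-person average for the top group are approximations, not reported results.

```python
TOTAL_CBS = 172
BOTTOM_FRACTION = 0.40    # bottom 40% of CBs
BOTTOM_GIVE = 10_231      # GIVE received by that group
BOTTOM_GIVE_SHARE = 0.10  # stated as 10% of total GIVE
TOP_FRACTION = 0.10       # top 10% of CBs
TOP_GIVE_SHARE = 0.40     # stated as 40% of total GIVE

bottom_count = round(BOTTOM_FRACTION * TOTAL_CBS)  # 68.8 rounds to 69
top_count = round(TOP_FRACTION * TOTAL_CBS)        # 17.2 rounds to 17

bottom_avg = BOTTOM_GIVE / bottom_count

# Assumption: the quoted 10% and 40% are exact, which they almost
# certainly are not, so everything below is an estimate.
implied_total = BOTTOM_GIVE / BOTTOM_GIVE_SHARE
top_avg = TOP_GIVE_SHARE * implied_total / top_count
ratio = top_avg / bottom_avg

print(f"bottom group: {bottom_count} CBs, about {bottom_avg:.0f} GIVE each")
print(f"implied total GIVE: about {implied_total:,.0f}")
print(f"top group: {top_count} CBs, about {top_avg:.0f} GIVE each")
print(f"top-to-bottom average ratio: about {ratio:.1f}x")
```

The group sizes and the 148 GIVE average match the figures in the text. The ratio between the two averages shows how little a member of the bottom group received by comparison.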

Remarks and Next Steps

For the most part, the best contributions trended toward useful, meaningful, and mission-aligned work, with most others falling somewhere between 'high quality but inaccurate' and 'low quality and accurate' through 'low quality and inaccurate'. Unfortunately, this wasn't apparent until the Early Stewards had evaluated as much as they could of what was submitted (managing ongoing work by 250 initial participants, it turns out, is really difficult).

From here, the Early Stewards will have to get together to assess which Community Builders will be asked to stay on in the program based on these voting results and some secondary qualitative assessments, which should lead us toward the next stage of what Namada's community will become. Many more projects are on the horizon for Community Building, and Namada's future is particularly bright having found people who want to be there to make it happen.